“When You Talk to People About Fairness, the Conversation Immediately Becomes Political”

getAbstract: Michael and Aaron, has either of you ever felt an algorithm wronged you?

Michael Kearns: We don’t think we have been treated unfairly, but if we had been, we might not know it. I certainly don’t think I’ve been a victim of some of the worst cases that we talk about in the book, like unfairly being denied a loan or given a criminal sentence that I didn’t deserve. But I have definitely – like many internet users – raised my eyebrows when ads pop up for things I had never searched for on Google itself, but somehow it knew that I might be interested in such a product via some other channel.

Aaron Roth: Privacy violations have become so ubiquitous that it hardly seems notable or personal that you might have experienced one. But here is an example that springs to my mind from grad school: On Facebook, they have this feature “people you might know.” They mostly suggest people who are reasonable, but in this case, they suggested I might want to be friends with my landlord. And it is unclear how they could have connected us because the relationship that I had with my landlord was entirely offline. I would occasionally send her a check for the rent, we had no friends in common on the social network, we had exchanged emails on Google, not on Facebook, and I had not provided Facebook access to my email records. So this stood out to me.

If we stop noticing most violations of privacy, as you say, are they then still violations? Or is our concept of privacy eroding?

Roth: For sure, privacy protections are quickly eroding. But it’s not that people don’t care, it’s largely that they’re unaware. Every once in a while, people become aware of how much is known about them. There was an incident that was widely covered in the press, maybe five years ago, about the retailer Target. They had mailed an advertisement for products for pregnant women to a young woman who still lived with her parents. Her family did not know she was pregnant. And Target had figured this out – by essentially using large-scale machine learning, where an algorithm, or ‘model,’ is derived automatically from large amounts of data – to correlate the purchases that she had made with the purchases of other customers.

Data mining is happening all the time, and usually, people don’t notice. But when an incident like this comes to people’s attention, they’re still outraged.

Aaron Roth

Is it now futile to hope companies will take better care of our privacy?

Roth: I don’t think it’s a lost cause. First, because people do care about privacy, companies can make privacy a selling point. One of the ways Apple brands itself as distinct from Google is by saying that they offer stronger privacy protections – and that’s true to an extent. They use several technologies that we talk about in the book, for example differential privacy. This is where carefully calibrated random noise is added to the data, or to the statistics computed from it, so that what comes out reveals very little about any one person. Second, as privacy-preserving technologies like differential privacy mature and become feasible without destroying the business model of companies that want to use machine learning, it becomes more and more possible to modernize privacy regulation to require the use of these technologies.

Kearns: Clearly, regulators care about privacy, and they care increasingly, as demonstrated by the GDPR (General Data Protection Regulation) in Europe and, at least at the state level in the United States, by laws like the California Consumer Privacy Act. We know from professional experience, from being asked to talk to regulators and to people in Congress in the United States, that there is increasing alarm over privacy erosion, and an increasing appetite to take concrete regulatory action against those erosions.

Roth: The use of differential privacy in the 2020 Census that’s coming up is going to be a good test case. That will be an example of the use of this technology on a large scale by a US government agency. If that goes successfully, I think it will make it much easier to think about and talk about technologies like this in privacy regulation.

How does differential privacy work, for instance, with the data troves that the US Census Bureau is holding?

Roth: Well, the Census Bureau has always been required by law to protect privacy; it’s just that the law doesn’t specify exactly what that means. They’ve never released raw data. They’ve always made some attempt to anonymize the data. But up until this year, they’ve been using heuristic techniques that don’t work very well. Before, they’d compute statistics after randomly swapping families from different neighborhoods. The effectiveness of this kind of technique relied on the secrecy of what they were doing, so nobody really knew how accurate the released statistics were. Starting this year, they’re going to use differential privacy. There’s no swapping families around: the statistics that they want to release will be computed exactly, and then perturbed with some amount of noise.
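Editor's illustration: the "compute exactly, then perturb with noise" step can be sketched with the Laplace mechanism, one standard way of achieving differential privacy. This is a minimal sketch, not the Census Bureau's production system, which the interview does not describe. The function names, the neighborhood count, and the privacy parameter `epsilon` are all assumptions made for the example.

```python
import random

def laplace_noise(scale, rng):
    """Draw one sample from a Laplace(0, scale) distribution.

    The difference of two independent exponential draws, each with mean
    `scale`, is Laplace-distributed with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def noisy_count(true_count, epsilon, rng):
    """Release a count perturbed with Laplace noise (hypothetical helper).

    Assumes that adding or removing one person changes the count by at
    most 1 (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(0)      # fixed seed only so the sketch is repeatable
true_population = 1342      # made-up count for one hypothetical neighborhood
released = noisy_count(true_population, epsilon=0.5, rng=rng)
print(round(released, 1))
```

Because the noise distribution is public, anyone using the released number knows how far it is likely to be from the exact one: smaller values of `epsilon` mean more noise and stronger privacy.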

What exactly is different, now?

Roth: It’s different in two ways, both of which are good. First, it provides formal guarantees: You don’t have to worry that someone cleverer will figure out how to undo your protections and break through them. Second: Because the process is not secret, it’s now possible to do more rigorous statistical analysis on the actual data that’s being released, for example to calculate the accuracy and therefore quantify the uncertainty in the statistics that the privacy protections are adding.

Kearns: A very powerful use of differential privacy, which we’re hoping to collaborate with the Census on, is creating synthetic data sets. You start with raw census data and produce a fake data set. So the data don’t correspond to real people at all, but in aggregate, the synthetic data set preserves desired statistical properties. And then people outside the Census can take that data set and do whatever they want with it – still privacy is preserved.
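Editor's illustration: one simple way to build a synthetic data set is to add noise to a histogram of the raw records and then sample fake records from the noisy histogram. The sketch below assumes that approach, with Laplace noise and made-up housing categories. It is not the method Kearns and Roth propose to the Census, which the interview does not specify, and `synthesize` is a hypothetical helper.

```python
import random
from collections import Counter

def laplace_noise(scale, rng):
    """Laplace(0, scale) sample as a difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def synthesize(records, categories, epsilon, n_synthetic, rng):
    """Sample fake records from a noisy histogram (hypothetical helper).

    Assumes adding or removing one record changes one category count
    by 1, so each count gets Laplace noise of scale 1 / epsilon.
    """
    counts = Counter(records)
    # Perturb each count, then clip at zero so the weights stay valid.
    noisy = [max(counts[c] + laplace_noise(1.0 / epsilon, rng), 0.0)
             for c in categories]
    if sum(noisy) == 0:
        noisy = [1.0] * len(categories)   # fall back to uniform weights
    # Only the noisy counts are used from here on, never the raw records.
    return rng.choices(categories, weights=noisy, k=n_synthetic)

rng = random.Random(1)      # fixed seed only so the sketch is repeatable
categories = ["renter", "owner", "other"]
raw = ["renter"] * 600 + ["owner"] * 350 + ["other"] * 50   # made-up data
synthetic = synthesize(raw, categories, epsilon=1.0, n_synthetic=1000, rng=rng)
print(Counter(synthetic))
```

No synthetic record corresponds to a real person, yet the category proportions stay close to those of the raw data, which is the aggregate property this toy example is meant to preserve.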

It is good to see the implementation of such techniques that are capable of maintaining people’s privacy. In your book, however, you describe a real struggle with other ethical considerations, right?

Roth: Yes, that’s right.

Of the areas we refer to as ethical algorithm design, privacy is the most mature.

Aaron Roth

We know that the study of algorithmic fairness will necessarily be messier than privacy. In our view, and the view I think of all our colleagues, differential privacy is kind of the right definition of privacy, in the sense that it provides very strong guarantees to individuals yet it still permits you to do very powerful things with data. And it’s a single definition that gives you a very general, powerful notion of privacy.

What about fairness?

Roth: We already know that fairness won’t be like that. However mature it eventually gets, we will always have multiple, potentially competing definitions of fairness. And even within a single definition, there might be quantitative trade-offs between the fairness you provide to different groups. Providing more of some type of fairness, for example by race, might mean that you have less fairness by gender. This isn’t about the state of our knowledge, and it’s not that we don’t know how to handle this yet; these are actual realities. That’s why the study of fairness is more controversial than privacy.

How so?

Roth: If you ask people, “Is privacy a good thing, if you could have more privacy while still having all of the services you’re used to and know and love, like social networks and search and email?”, they would say: “Yeah, why not?” But when you talk to people about fairness, the conversation immediately becomes, for lack of a better term, political. Because right away, it’s like: Who are you trying to protect with a definition of fairness? What amount of protection should they get? And why do they need protection in the first place – is it because they are genuinely disadvantaged now, or are we trying to redress a past injustice?

When you present your book and discuss the issues, does it surprise you how people perceive privacy and fairness?

Roth: As scientists we think: These are both interesting and important social norms, and we want to make a science out of embedding them in algorithms. And so to us, there is a similarity between them. But if you go out into the world and talk to people about them, people have much stronger feelings about fairness and whether we should be enforcing it and for whom, than they do about privacy.

How do you deal with that political aspect? Do you try to exclude it or formalize it?

Kearns: We think it’s important for people like us to delineate the parts that can and should be made scientific, and also to delineate the things that are still thorny problems that society has to decide. And in the case of fairness, we would put the choice of definition in the latter category, the part society has to agree on: not society at large, but the stakeholders in any particular application domain.

What type of fairness do you choose to implement, and who are you trying to protect? And once you make those decisions, people like us can help you get them in your algorithms.

Michael Kearns

Roth: One of the scientific questions is to elucidate trade-offs. And trade-offs are inevitable between particular notions of fairness and predictive accuracy when you’re training a machine learning algorithm. And also between different things you might reasonably mean and want when you say “fairness.” The trade-offs are going to be there no matter what, even if you don’t talk to scientists. But if you talk to scientists, you can make the decision with your eyes open.

So there are different kinds of fairness? Are some kinds easier to implement than others?

Kearns: The most common type of fairness definition is one in which you try to protect very broad groups against some kind of statistical harm. In lending, you might say about false rejections for a loan – you deny somebody the loan who would have repaid it – that the rate at which you caused that harm to white people and black people has to be the same. These types of definitions are relatively easy to implement, because you’re only worried about very broad statistical categories with lots of people in them. Now your model is going to make mistakes, and if you are a black person who was falsely rejected for a loan, you’re supposed to feel better by this definition because white people are also being falsely rejected for loans at the same rate. That is cold comfort. But these are relatively easy constraints to enforce in a machine learning algorithm.
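Editor's illustration: the group-level definition Kearns describes amounts to comparing false rejection rates across groups. The sketch below computes that rate per group on a handful of made-up applicants. The group labels, the records, and the helper name are assumptions for the example, and in practice whether a denied applicant would have repaid is not directly observable.

```python
from collections import defaultdict

def false_rejection_rates(applicants):
    """Per-group rate at which applicants who would have repaid were denied.

    Each applicant is a (group, would_repay, approved) tuple.
    """
    denied = defaultdict(int)
    creditworthy = defaultdict(int)
    for group, would_repay, approved in applicants:
        if would_repay:
            creditworthy[group] += 1
            if not approved:
                denied[group] += 1
    return {g: denied[g] / creditworthy[g] for g in creditworthy}

# Made-up records: (group, would_repay, approved)
applicants = [
    ("A", True, True), ("A", True, False), ("A", True, True), ("A", False, False),
    ("B", True, False), ("B", True, False), ("B", True, True), ("B", False, True),
]
rates = false_rejection_rates(applicants)
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A fairness constraint of this kind asks that `gap` be zero, or below some tolerance. It says nothing about which individuals within a group are the ones falsely rejected.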

What is harder to implement?

Kearns: Harder to implement – and this is where the science also gets more challenging and therefore more interesting – is where you are trying to make things that are closer to guarantees to individuals. That’s been the focus of a lot of our research in the past several years. Those definitions are more challenging, both from a statistical and from an algorithmic standpoint, because you are looking at much finer-grained resolution than these crude aggregate statistical guarantees.

So, will an algorithm ever be able to guarantee fairness towards me?

Kearns: My answer to that would be no. Not in very general circumstances. You can write down mathematical models and assumptions under which you can provide those kinds of guarantees, but unfortunately, those kinds of assumptions usually are violated on real data.

Roth: Rather than say “no,” I would say “maybe.” Machine learning is inherently statistical. And so it is challenging when you are talking about promises to individuals. We are trying to find the right definitions to balance these two desiderata. That’s the kind of thing that people like us are writing academic papers about, but turning any of these into actionable technologies is probably five to 10 years away.

Machine learning mimics the pattern-searching and model-building that people do – with sometimes the same unethical results. Should people be aware of the algorithms their brain is processing?

Kearns: Such self-awareness, or the ability to actually reason about the “algorithms” we use to make decisions in our heads, would be hard. We would certainly not expect to get a description of those algorithms that would approach the precision we have when we code algorithms. But another experience we had in talking about the book is having to remind people that – taking the consumer lending example again – it is not the case that algorithms introduce racial bias into lending. There was racial bias in lending long before computers made such decisions. It’s just that we never before had a precise centralized description of the “algorithm” that was used historically when humans made lending decisions. Even short of actually understanding what that human algorithm or collective algorithm looked like, it’s important to remember that things like the trade-off between accuracy and fairness exist, whether we’re talking about code or a group of loan officers making decisions. What is different now is that you can do it at scale: You can have the same algorithm, or model, make the lending decisions for an unlimited number of people very rapidly, compared to the old days, where each loan application had to be looked at by a human.

Even if algorithms don’t introduce discrimination into lending, they can now implement it at scale. And when something is done at scale, it makes it harder to overturn or get recourse on a decision.

Michael Kearns

You mean: In the old days, if you thought maybe you were denied a loan because the loan officer was racist, you could try to find another loan officer who wasn’t racist?

Kearns: Exactly. But if it’s a single algorithm, and every bank is using it, there’s nothing you can do anymore. So the algorithm becomes kind of a single point of failure. But there’s also an opportunity because if you can fix the algorithm, or make it better, there is also a single point of improvement.

Do developers always understand what their algorithms are doing?

Roth: In machine learning, you specify only a very simple objective function. Usually, the goal is to minimize the number of mistakes the algorithm makes, or maximize revenue. And then you have the computer systematically search over some enormous “space” of models, trying to find the one that does the best, according to this narrow objective function. This methodology is very powerful, and it lets us do all sorts of things that we couldn’t do before, but it means that it’s very hard to predict anything about the model that you get as output, except that it will be very good – as measured by the narrow objective function that you specified.
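Editor's illustration: the methodology Roth describes, a narrow objective plus a systematic search over candidate models, can be shown in miniature. In this sketch each "model" is just a credit-score cutoff, the objective is the number of wrong decisions, and the data are made up. Real training searches a vastly larger space by numerical optimization rather than by trying every candidate.

```python
def count_mistakes(threshold, data):
    """The narrow objective: number of wrong approve/deny decisions.

    An applicant is approved when their score is at least `threshold`;
    a decision is wrong when approval and actual repayment disagree.
    """
    return sum((score >= threshold) != repaid for score, repaid in data)

# Made-up (credit_score, repaid) pairs
data = [(520, False), (580, False), (610, True), (640, False),
        (660, True), (700, True), (750, True)]

# The "space of models": every cutoff from 500 to 800 in steps of 10.
candidate_thresholds = range(500, 801, 10)
best = min(candidate_thresholds, key=lambda t: count_mistakes(t, data))
print(best, count_mistakes(best, data))
```

The search returns whichever cutoff scores best on the stated objective and nothing more. Any other property of the chosen model, such as how its mistakes fall across groups, has to be measured separately.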

What if you measure it against other objectives?

Roth: It’s hard to predict and understand the other properties it might have, and what it means is that there can be side effects that manifest themselves as, for example, discrimination or privacy violation that were not intentional.

There was no racist software engineer who decided that he was going to come up with a discriminatory algorithm.

Aaron Roth

What makes things complicated is that these problems arise unintentionally to begin with. It’s not enough to say, “OK, well, we’ll have ethics training for our software engineers, so they don’t come up with racist algorithms.” We have to develop a science to figure out how to use machine learning without these side effects emerging.

And we as citizens have to be vigilant about the outcomes, keeping track of what the algorithms do, as in the way we keep track of how our politicians vote?

Roth: Exactly. Because there is this misguided popular feeling that algorithms – because they are, in a sense, mathematical – are inherently objective or unbiased. We try to disabuse people of that notion in our book. The point is that, just as with human decision makers, we should care about the outcomes that result when we use these algorithms in aggregate. And we want to be alert for the possibility that they might be doing things we don’t want.

This is even more pressing when smart algorithms evolve into actual artificial intelligence. Will your research help keep AI on the straight and narrow?

Roth: That’s probably a very long way off. We’re nowhere near close to understanding what intelligence is. What machine learning has become very good at is a much narrower task, basically just classification. Ethical algorithm design, embedding things like privacy and fairness into these algorithms, is also a much more narrowly scoped study than designing computers that can think. It’s certainly not the path to designing general artificial intelligence. But as we start increasingly automating things – and these are the preliminary steps, maybe, to artificial intelligence – the need to make sure that algorithms do not have unintended side effects will only become more important.

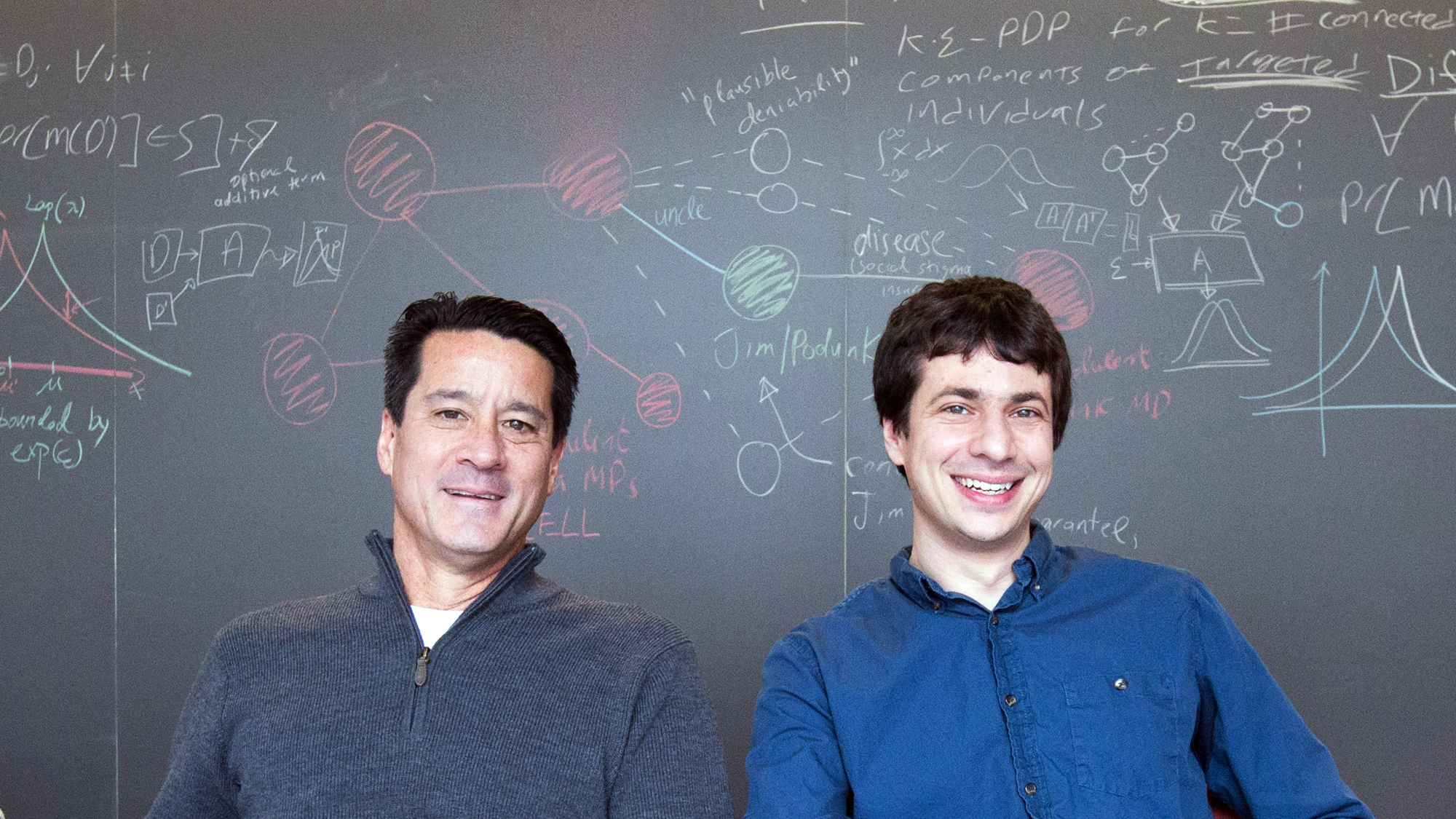

About the authors

Michael Kearns, PhD, is a professor of computer and information science at the University of Pennsylvania. He has worked in machine learning and artificial intelligence research at AT&T Bell Labs. Aaron Roth, PhD, is an associate professor of computer and information science at the University of Pennsylvania.